Artificial Intelligence & Data Science

Smarter Sensing & Radar with AI-Driven Engineering

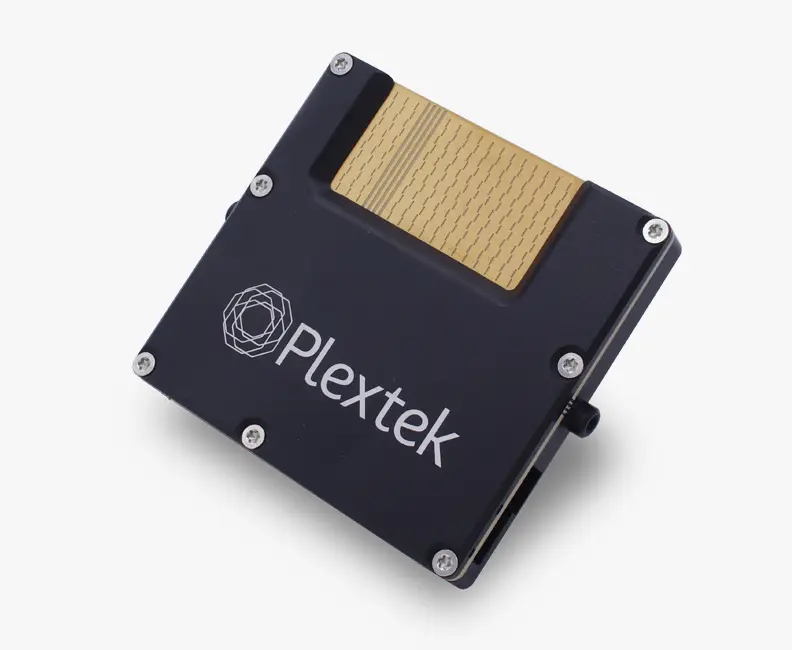

AI is revolutionising sensing and radar technology, providing new levels of accuracy, efficiency, and reliability. At Plextek, we combine 35+ years of engineering excellence with cutting-edge AI and data science to create next-generation sensing solutions that push the boundaries of performance. With our deep technical knowledge and hands-on approach, we help you integrate AI into your sensing and radar systems, resulting in smarter, faster, and more intuitive technology for industries where performance is critical.

From radar systems that detect threats with greater precision to sensor networks that adapt intelligently to their environment, our expertise in AI-driven signal processing, sensor fusion, and predictive analytics helps businesses enhance safety, reliability, and real-time decision-making.

Machine Learning

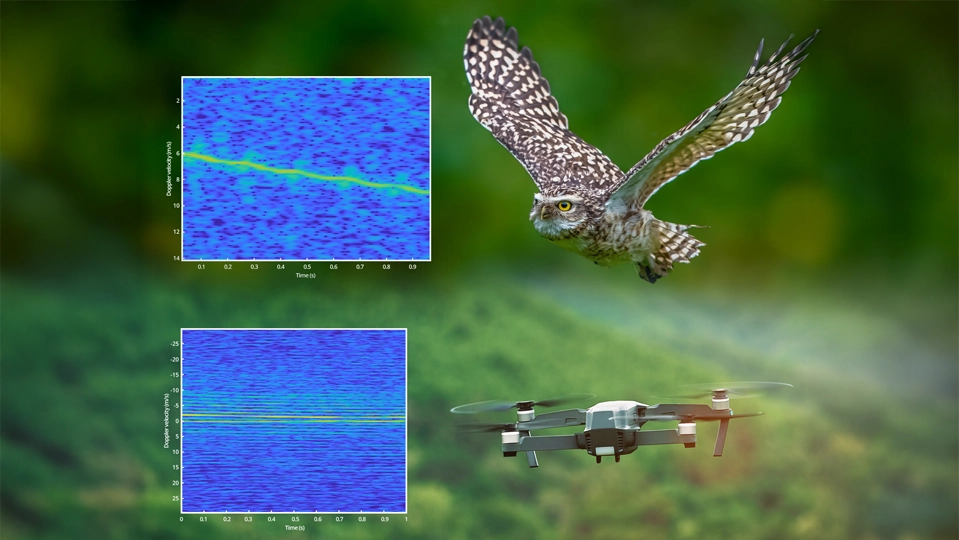

We apply AI-driven algorithms to detect patterns, predict anomalies, and improve decision-making in real time. Our expertise helps radars and sensor networks adapt dynamically to changing environments, enhancing performance in defence, healthcare, industrial automation, and beyond.

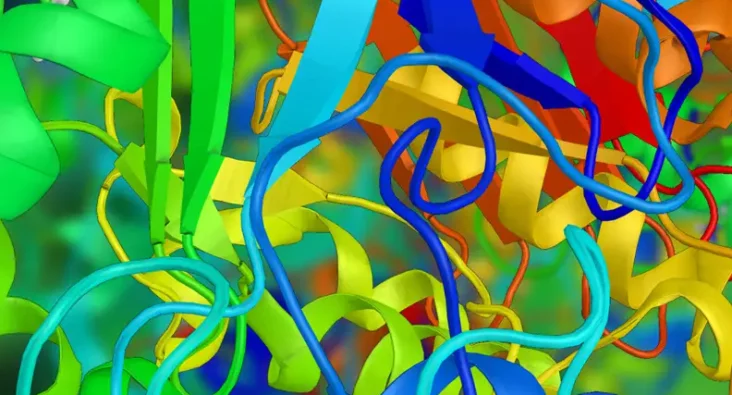

Machine Vision & Image Processing

We integrate sophisticated image processing techniques that enhance sensor capabilities. Our solutions improve object detection, classification, and tracking in complex scenarios, making sensing systems more responsive and accurate.

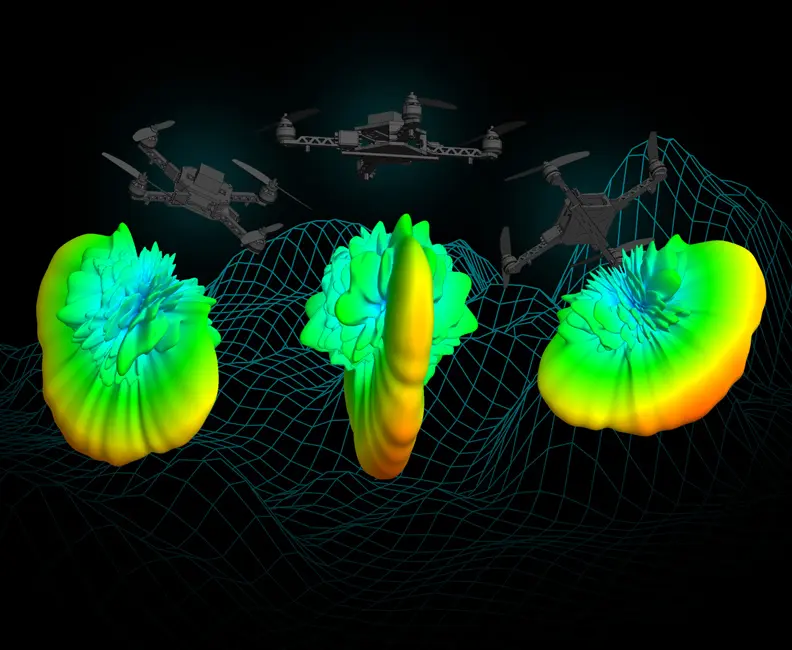

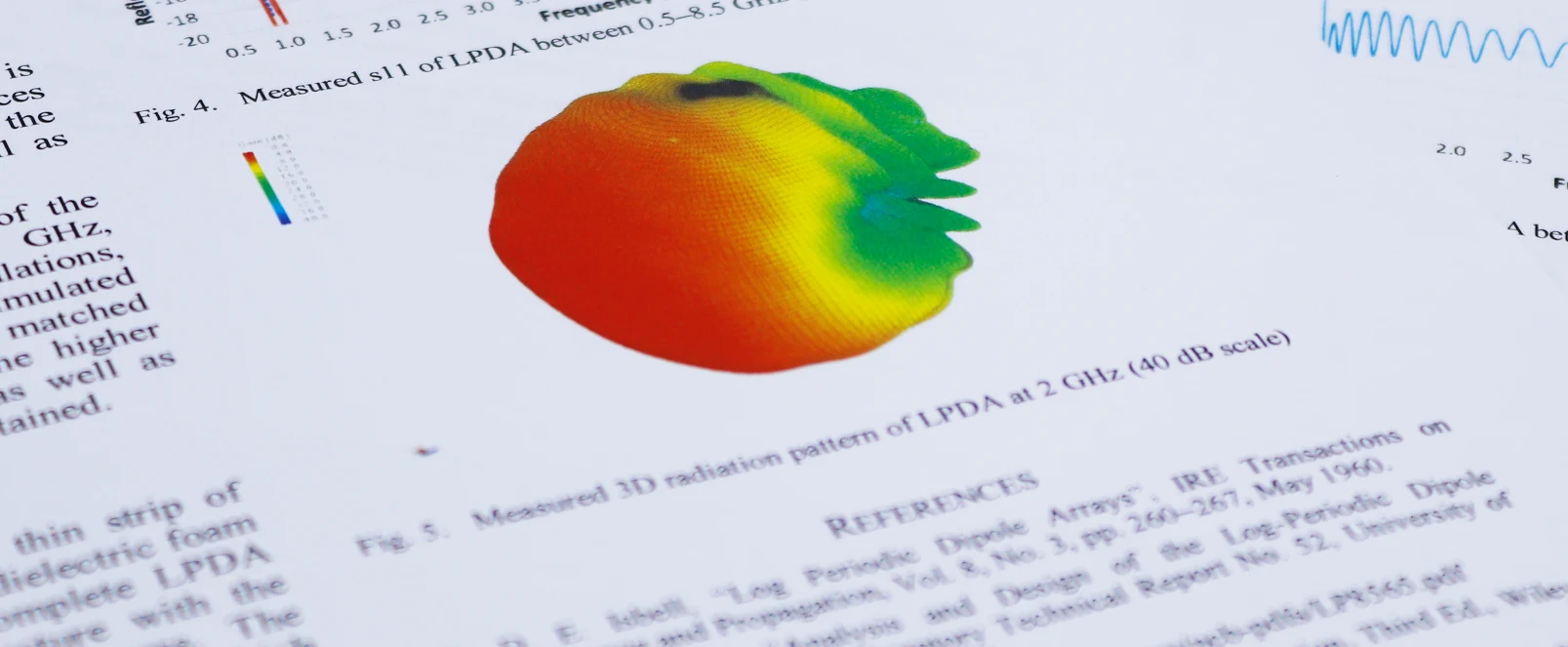

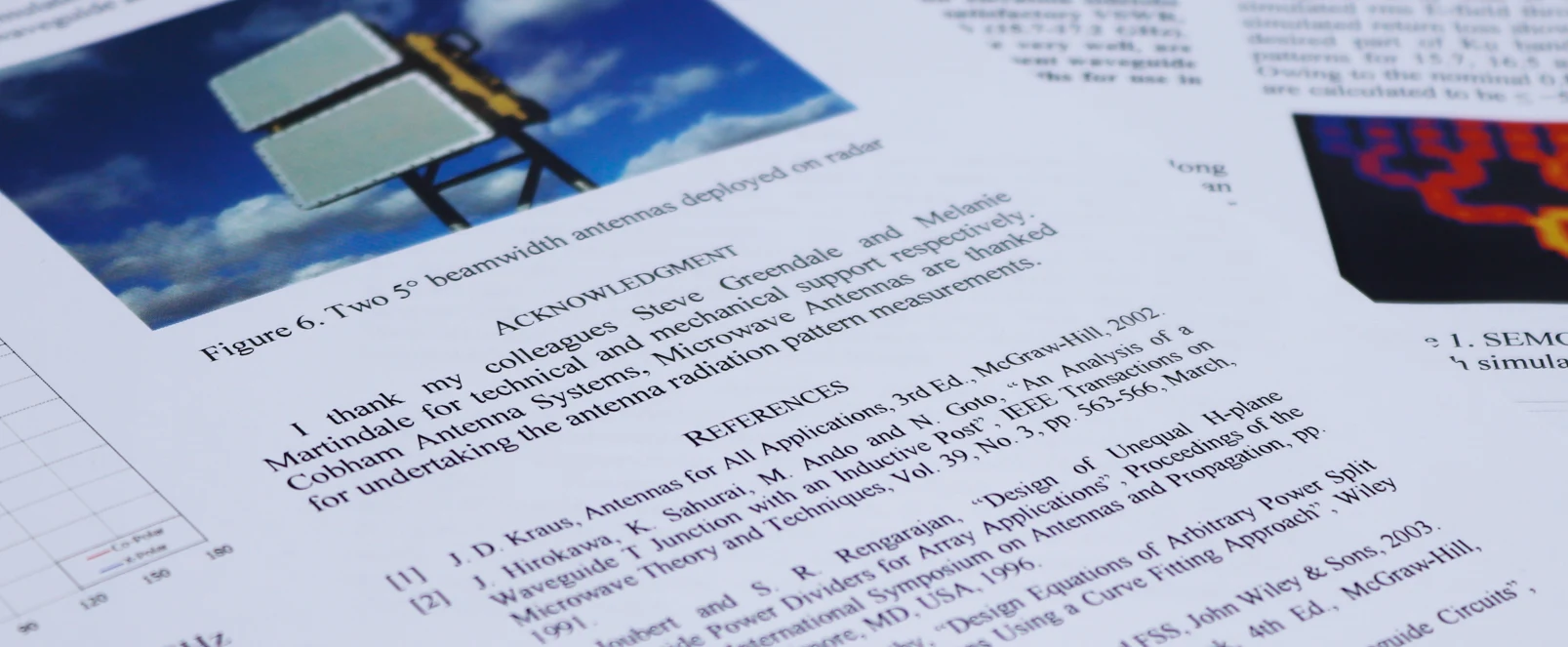

Modelling & Simulation

Machine learning makes it possible to map radio waves in seconds. For cellular network operators, finding just the right location is crucial to maximising the coverage of a new base station.

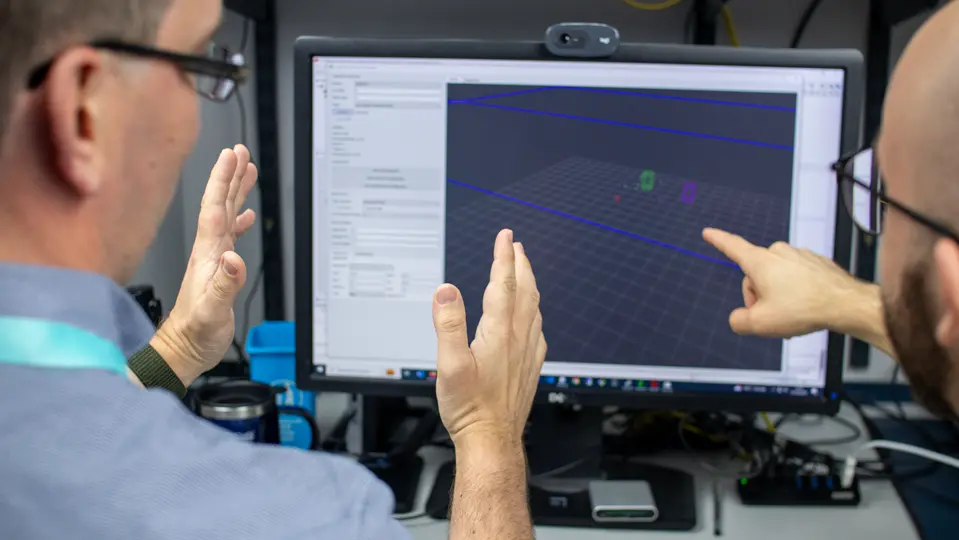

Sensor Fusion

Our expertise in AI-driven signal processing and sensor fusion enables us to combine data from multiple sensors, creating systems that can deliver superior environmental awareness and performance beyond what any single sensor can achieve.
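To illustrate why combining sensors can outperform any single one, here is a minimal sketch of inverse-variance weighting, a textbook way to fuse independent measurements of the same quantity. It is an illustrative example only, not a description of Plextek's methods; the function name `fuse_measurements` and the radar and lidar figures are hypothetical.

```python
def fuse_measurements(readings):
    """Fuse independent (value, variance) readings by inverse-variance weighting.

    Assumes every reading measures the same quantity, the errors are
    independent, and each variance is known and positive.
    """
    if not readings:
        raise ValueError("need at least one reading")
    # A more certain sensor (smaller variance) gets a larger weight.
    weights = [1.0 / variance for _, variance in readings]
    total_weight = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, readings)) / total_weight
    # The fused variance is never larger than the smallest input variance.
    fused_variance = 1.0 / total_weight
    return fused_value, fused_variance


# Hypothetical range estimates of one target, as (metres, variance in m^2).
radar = (102.0, 4.0)
lidar = (100.0, 1.0)
fused_value, fused_variance = fuse_measurements([radar, lidar])
print(f"fused range: {fused_value:.1f} m, variance: {fused_variance:.2f} m^2")
```

Under these assumptions the fused estimate sits closer to the more reliable sensor, and its variance is lower than that of either input, which is the sense in which fusion can exceed what a single sensor achieves.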

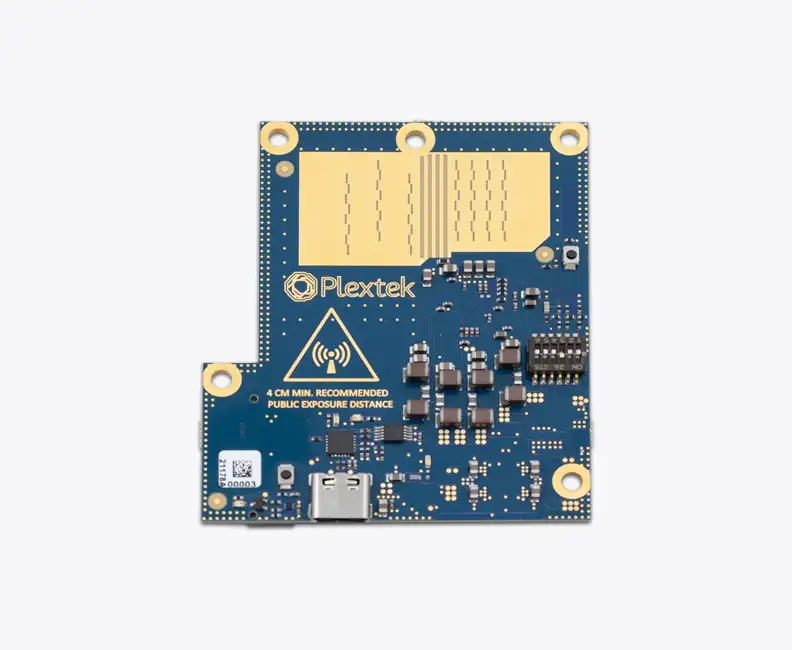

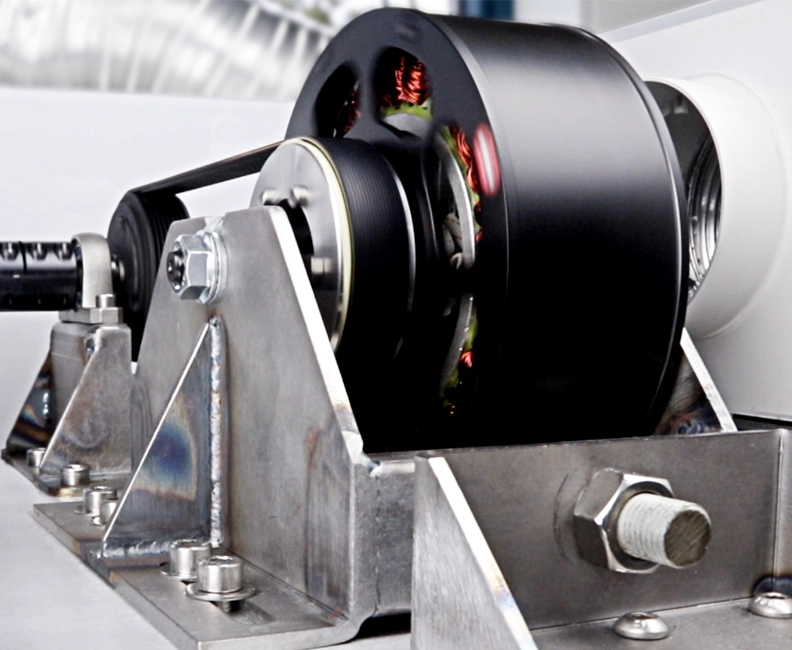

Embedded Intelligence

Our edge AI solutions enable real-time, low-latency decision-making without reliance on the cloud. By embedding intelligence directly into radar and sensing hardware, we provide faster, more efficient, and more resilient systems for mission-critical applications.

Why Choose Plextek?

- Over 35 Years of Expertise: A trusted name in engineering innovation, Plextek has a proven track record of delivering data science solutions for industry leaders.

- Multi-Disciplinary Team: Our engineers, data scientists, and AI specialists work collaboratively to deliver highly customised and scalable AI implementations.

- End-to-End Development: From concept to deployment, we provide full lifecycle support, ensuring seamless integration of AI into your products.

- Real-World Success: We have successfully developed AI-driven products across industries, from healthcare and defence to industrial automation and consumer electronics.

As the coordinating partner, we were impressed by Plextek's expertise in data fusion. The team effectively researched standards and demonstrated a solid understanding of data fusion concepts, supporting the customer's needs in deconstructing standard sets. Despite challenging time constraints, Plextek delivered a well-constructed and valuable report that exceeded expectations. We look forward to further collaboration in developing data fusion architecture with Plextek.

Thomas Howe

Senior Principal Navigation and Seamanship, BMT